Most enterprises now run a dozen AI initiatives in parallel. A copilot in the ticketing system. A RAG bot indexed on the wiki. An agent that drafts SOWs. A chat interface bolted onto the data warehouse. Each one ships, each one demos well, and almost none of them compound into anything an operations leader can actually run.

The bottleneck is not model quality. It is the absence of an operating layer.

What an "operating system" means here

An operating system gives a fleet of programs three things: a way to be built and deployed, a shared memory of state, and a way to be monitored and governed. Without it, every program is an island.

Enterprise AI today looks like those islands. Without an operating layer:

Every agent is built from scratch, with its own connectors, prompts, and tool definitions.

Every conversation forgets the last one. Knowledge does not compound.

Every team rebuilds its own RBAC, audit, and policy story.

Every executive question becomes a separate analytics project.

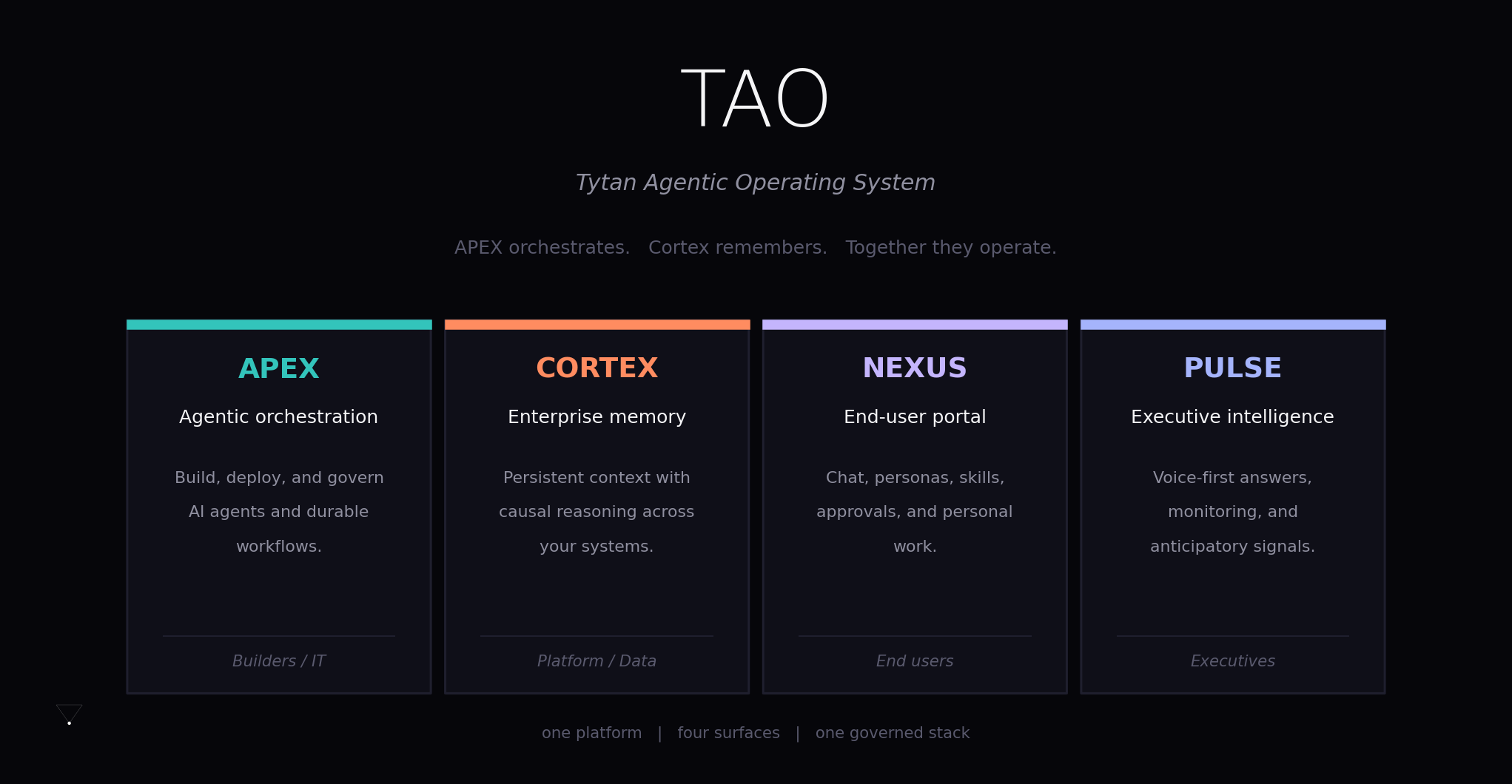

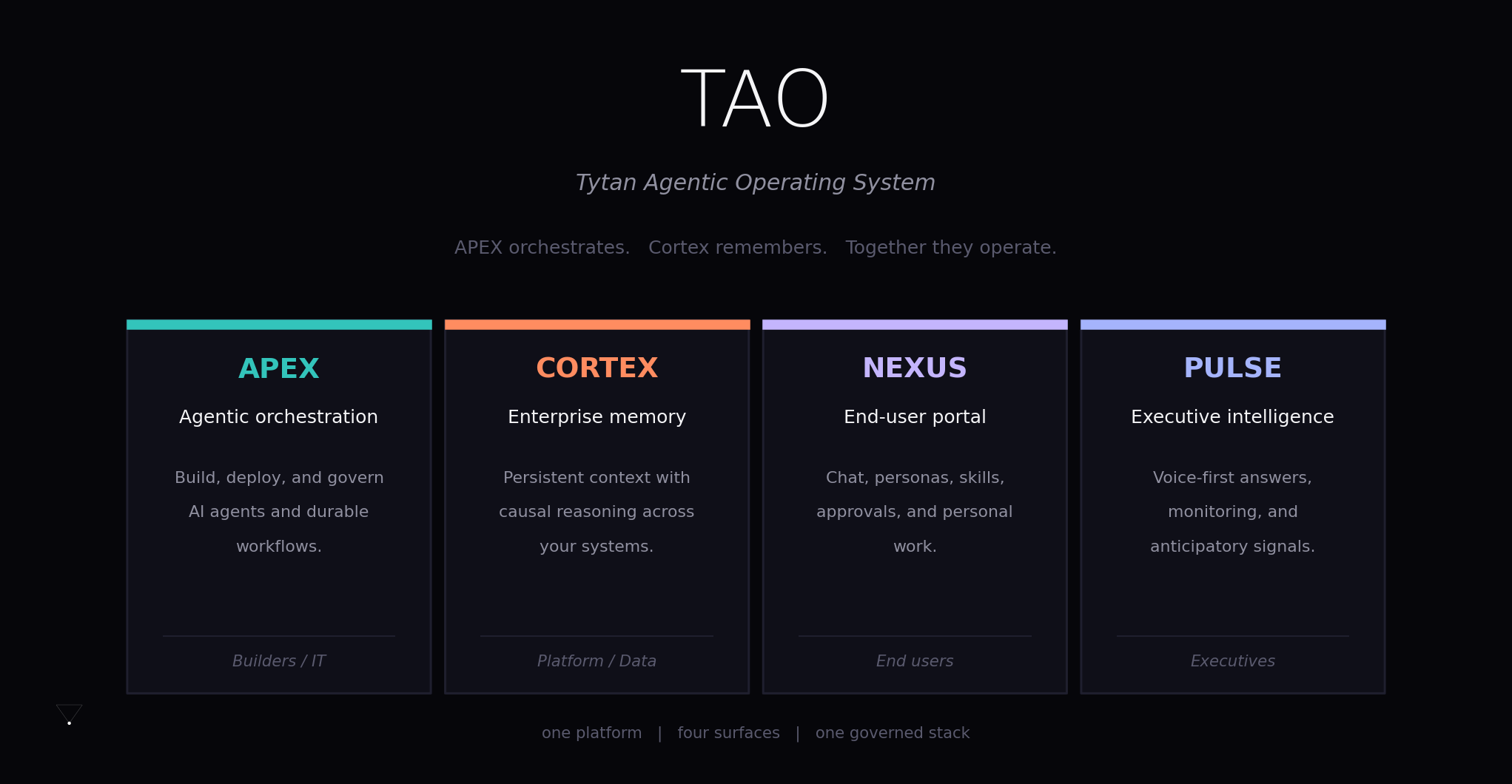

TAO is the operating system for that fleet. One platform, four surfaces, one governed stack.

Four surfaces, four audiences

TAO is organised around four user-facing surfaces. Each maps to a different audience and a different category in the platform's permission model.

APEX is the build-and-operate surface for engineers, IT, and platform teams. It is the agent factory and the visual workflow engine. You design agents with configurable models, tools, knowledge, and memory. You compose them into durable DAG workflows with retry policies, human-approval gates, and release gates. APEX is where automation gets authored, versioned, and put into production.

Cortex is the memory and causal-reasoning layer. It is the difference between an AI that retrieves and an AI that remembers. More on this in a moment.

Nexus is the end-user surface, where business users actually do work. Chat. Personas. Skills. Approvals queues. A personal "My Work" view. The point of Nexus is that the agents your platform team builds in APEX have somewhere to land that an end user can use without training.

Pulse is the executive surface. Voice-first. Anticipatory. Designed to answer questions like "why did Q3 miss" or "what is putting our largest accounts at risk" with citations, not vibes.

Same platform underneath. Different lens for each audience.

APEX: orchestration with guardrails

APEX is what most vendors mean when they say "agent platform." TAO's bet is that the orchestration layer has to be built like an enterprise system from day one, not retrofitted.

That means:

A durable DAG engine with checkpoints, so a 4-hour workflow that fails in hour three resumes from hour three, not from the beginning.

20+ node types covering agents, tool calls, branches, loops, fan-out, human approvals, and release gates.

Per-node retry policies and structured outputs.

A multi-provider model router (OpenAI, Anthropic, Azure OpenAI, Ollama) that selects on latency, cost, and quality.

Sandboxed tool execution with a per-user, Fernet-encrypted credential vault.

Built-in connectors for HTTP, Postgres, Jira, ServiceNow, Email, and Teams. MCP for the rest.

Nothing on this list is a feature you would skip. Together, these are what turn a demo into a production agent.
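To make the checkpoint-and-retry idea concrete, here is a minimal sketch in Python. It is not APEX's engine. The function name `run_workflow`, the JSON checkpoint file, and the retry count are all assumptions chosen for illustration. The only point is the behaviour: finished nodes are persisted, so a rerun skips them.

```python
import json
from graphlib import TopologicalSorter
from pathlib import Path


def run_workflow(dag, tasks, checkpoint_path, max_retries=2):
    """Run tasks in dependency order, persisting each result as it completes.

    dag: mapping of node -> set of upstream nodes it depends on.
    tasks: mapping of node -> callable that receives a dict of upstream results.
    (Hypothetical interface, for illustration only.)
    """
    path = Path(checkpoint_path)
    # Load results from an earlier, interrupted run if a checkpoint exists.
    done = json.loads(path.read_text()) if path.exists() else {}

    for node in TopologicalSorter(dag).static_order():
        if node in done:
            continue  # finished in a previous run, so it is not repeated
        inputs = {dep: done[dep] for dep in dag.get(node, ())}
        for attempt in range(max_retries + 1):
            try:
                done[node] = tasks[node](inputs)
                break
            except Exception:
                if attempt == max_retries:
                    raise  # retries exhausted; earlier nodes stay checkpointed
        path.write_text(json.dumps(done))  # checkpoint after every node
    return done
```

Calling `run_workflow` a second time with the same checkpoint path picks up at the first node that has no saved result. A production engine would also need atomic writes, per-node retry policies, and results that are not limited to JSON-serialisable values.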

Cortex: where the real differentiation lives

The most repeated word in AI sales decks today is "RAG." Retrieval-augmented generation has become the default answer to enterprise context. It is also the shallowest possible answer.

RAG is stateless lookup. Each query starts cold, asks a vector store for similar text passages, and stitches them into a prompt. Useful, but it forgets. It does not know what was true last quarter versus what is true today. It cannot tell you why a number moved. It does not remember the decisions your team has already made.

Cortex is a memory store with six classified memory types: episodic, semantic, temporal, causal, procedural, and policy. It is bi-temporal, so it knows both when something was recorded and when it was true. It builds a causal graph using Granger causality and regime detection, so the relationships it models between metrics can shift with the business cycle. And it stamps every memory with HMAC-signed provenance, so an answer can be traced back to source records and verified.

The slogan reads: RAG retrieves facts. Cortex understands consequences.
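Two of those properties, bi-temporality and HMAC-signed provenance, are easy to sketch with the Python standard library. This is an illustration, not Cortex's schema. The `Memory` fields, the `as_of` query, and the hard-coded demo key are all assumptions.

```python
import hashlib
import hmac
import json
from dataclasses import asdict, dataclass
from datetime import date

# Hypothetical demo key; a real deployment would load this from a vault.
SECRET = b"demo-key-not-for-production"


@dataclass(frozen=True)
class Memory:
    kind: str          # one of the six memory types, e.g. "semantic"
    subject: str
    value: float
    valid_from: date   # when the fact became true in the business
    recorded_at: date  # when the store learned about it
    source_id: str     # pointer back to the source record


def sign(memory: Memory) -> str:
    """Return an HMAC-SHA256 tag over a canonical JSON form of the memory."""
    payload = json.dumps(asdict(memory), sort_keys=True, default=str).encode()
    return hmac.new(SECRET, payload, hashlib.sha256).hexdigest()


def verify(memory: Memory, tag: str) -> bool:
    """Constant-time check that the memory still matches its tag."""
    return hmac.compare_digest(sign(memory), tag)


def as_of(memories, subject, valid_on, known_by):
    """Latest value true on `valid_on`, using only records known by `known_by`."""
    candidates = [
        m for m in memories
        if m.subject == subject
        and m.valid_from <= valid_on
        and m.recorded_at <= known_by
    ]
    if not candidates:
        return None
    return max(candidates, key=lambda m: (m.valid_from, m.recorded_at))
```

Asking `as_of` the same question with two different `known_by` dates separates "what was true" from "what we knew at the time", which is the distinction stateless retrieval cannot make.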

The implementation detail that makes this work is signal compression. Raw enterprise data stays where it lives, in the source systems. Cortex stores only deviations and signals, the things a senior analyst would carry around in their head. On a representative dataset (a $180M ARR discrete manufacturer with 14.5 million source records) Cortex compresses to roughly 2,730 memories. That is a compression ratio near 5,300:1.

You do not need a new data warehouse. You need a different idea of what is worth remembering.
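As a rough sketch of what "store only deviations" could mean in code, the Python below keeps a data point only when it departs sharply from its trailing baseline. The z-score rule, the window size, and the threshold are assumptions for illustration. The article does not describe Cortex's actual selection rule. The second function simply reproduces the ratio arithmetic quoted above.

```python
from statistics import mean, stdev


def compress_to_signals(values, window=5, threshold=3.0):
    """Keep only points that deviate sharply from the trailing window's baseline.

    Returns a list of (index, value) pairs. The z-score rule is an assumed
    stand-in for whatever deviation test a real system would use.
    """
    signals = []
    for i in range(window, len(values)):
        baseline = values[i - window:i]
        mu, sigma = mean(baseline), stdev(baseline)
        if sigma == 0:
            deviates = values[i] != mu  # flat baseline: any change is a signal
        else:
            deviates = abs(values[i] - mu) / sigma > threshold
        if deviates:
            signals.append((i, values[i]))
    return signals


def compression_ratio(source_records, memories):
    """Source records per stored memory."""
    return source_records / memories


# The figures quoted above: 14.5 million source records, about 2,730 memories.
ratio = compression_ratio(14_500_000, 2_730)
```

With the quoted figures, `ratio` comes out a little above 5,300, which matches the "near 5,300:1" claim.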

What this looks like to an executive

Pulse is where causal memory pays off as an executive experience. The wedge demo is one question:

"Why did Q3 miss?"

The answer arrives in under five seconds, with a chain of evidence the CFO can audit.

The chain reads as a story, but each link is a typed causal edge with a detected lag, a confidence score, and a cryptographic signature linking it back to source events. On-time-in-full collapsed in April. Quality on Line 3 slipped through May. Acme's CSAT score followed. Their renewal flagged red in June. Q3 ARR came in $4.2M short in September.
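A typed causal edge of this kind can be sketched as a small data structure plus a backward walk from the outcome. The Python below is a hypothetical illustration. The field names, the node labels, and every lag and confidence value are placeholders invented for the example, not figures from the dataset, and `explain` is an assumed helper rather than a Pulse API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CausalEdge:
    cause: str
    effect: str
    lag_days: int      # detected delay between cause and effect
    confidence: float  # 0.0 to 1.0
    signature: str     # provenance tag tying the edge to source events


def explain(edges, outcome):
    """Walk backwards from an outcome, following the most confident edge each step.

    Returns the chain ordered from root cause to outcome.
    """
    by_effect = {}
    for edge in edges:
        by_effect.setdefault(edge.effect, []).append(edge)

    chain, seen, node = [], {outcome}, outcome
    while node in by_effect:
        best = max(by_effect[node], key=lambda e: e.confidence)
        if best.cause in seen:
            break  # guard against cycles in the graph
        chain.append(best)
        seen.add(best.cause)
        node = best.cause
    return list(reversed(chain))


# Placeholder edges mirroring the story above; all numbers are illustrative.
EDGES = [
    CausalEdge("otif_collapse", "line3_quality_slip", 30, 0.80, "<sig>"),
    CausalEdge("line3_quality_slip", "acme_csat_drop", 20, 0.75, "<sig>"),
    CausalEdge("acme_csat_drop", "acme_renewal_red", 25, 0.70, "<sig>"),
    CausalEdge("acme_renewal_red", "q3_arr_miss", 90, 0.85, "<sig>"),
]

chain = explain(EDGES, "q3_arr_miss")
```

Each element of `chain` carries its own lag, confidence, and signature, so the narrative can be rendered for an executive while every link remains individually checkable.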

This is the same data your operations and customer-success and finance teams already have. What changes is that the relationships between the data are recorded, not reconstructed in a slide deck three months after the fact.

Pulse turns Cortex into an early-warning system. The same machinery that explains a miss after the fact can flag a forming pattern 60 to 90 days earlier. That is the difference between AI as reporting and AI as operations.

Nexus: where the work actually happens

A platform that only an architect can use is not an enterprise platform. Nexus is the surface for everyone else. It is where a sales lead opens a chat, talks to a persona configured by their RevOps team, and gets answers grounded in their territory and their accounts. It is where an analyst sees an approvals queue from agents acting on their behalf. It is where memory built up over months of conversations is browseable, correctable, and confirmable.

If APEX is where you build agents and Pulse is where executives ask the hardest questions, Nexus is where the daily work compounds. Every confirmation an end user gives, every correction, every approved action feeds back into Cortex. The platform learns by being used.

The stack underneath

TAO is not a monolith. It is a layered architecture of FastAPI microservices behind a React portal, with PostgreSQL plus pgvector as the primary store, ChromaDB for embeddings, Redis for sessions and queues, and OpenTelemetry running through every layer.

The layers are stacked so that nothing operates outside the governance and observability planes. Every model call goes through the policy engine. Every agent run is traced. Every cost is attributed. RBAC is hierarchical with dotted paths, so permissions resolve from category to subcategory to page, with admin on a parent implying admin on every descendant.
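The dotted-path rule, where admin on a parent implies admin on every descendant, can be sketched in a few lines of Python. The function name `is_admin` and the example paths are hypothetical. The sketch covers only the admin-inheritance rule stated above, not TAO's full permission model.

```python
def is_admin(admin_paths, path):
    """True if admin is granted on `path` itself or on any of its ancestors.

    admin_paths: set of dotted paths where the user holds admin,
    for example {"apex.workflows"} (hypothetical path names).
    """
    parts = path.split(".")
    # Check every ancestor prefix, segment by segment: "a", "a.b", "a.b.c".
    return any(
        ".".join(parts[:depth]) in admin_paths
        for depth in range(1, len(parts) + 1)
    )
```

Matching whole segments rather than raw string prefixes matters here: a grant on `apex.workflows` should not leak to a sibling path such as `apex.workflows_archive`.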

It is multi-tenant from the bottom up: organisation contains workspaces, workspaces contain projects, every row in every table is tenant-scoped. Helm charts and Kustomize overlays ship for staging and production. The cluster you run TAO on is the cluster your security team already trusts.

This is what people mean when they say "audit-grade by default."

The bottom line for an executive

If you are a CIO or a VP betting on enterprise AI in 2026, the question is no longer whether AI works. It is whether your AI compounds.

Three things have to be true for that to happen:

One platform, not twelve. Agents, workflows, memory, governance, and observability have to live in the same operated stack. Otherwise every initiative pays its own integration tax.

Memory that survives the conversation. Stateless RAG is a feature, not a strategy. Causal, governed, provenance-signed memory is what turns a copilot into an institution.

A surface for every audience. Builders, end users, and executives need different lenses on the same system. A platform that serves only one of them becomes shelfware for the other two.

That is the design TAO is built around. APEX orchestrates. Cortex remembers. Nexus is where the work happens. Pulse is where the answers land.

Together, they operate.

Want to see the "Why did Q3 miss?" demo on your own data? Get in touch.